Recently I observed something new to think about on a VMware cluster.

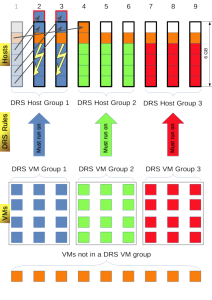

Assume the situation shown in the picture above.

As you can see, there is a cluster with 9 ESXi hosts and 36 VMs.

For ease of understanding, let's assume every host has 6 GB of RAM available for VMs and every VM is configured with 1 GB of memory. (No reservations, limits, or different priorities are used.)

Because of licensing restrictions, most of the VMs must run on the specific hosts they are licensed for. Some VMs, however, have no licensing restrictions. Each host is in the DRS Host Group that corresponds to its license, and the license-restricted VMs are in the corresponding DRS VM Groups. To enforce license compliance, each DRS VM Group is mapped to its DRS Host Group with a DRS “must run on” rule.

In normal operation everything is fine. The cluster is perfectly balanced and each host has 5 GB of memory in use. That leaves 9 GB of free memory in the cluster, more than a single host's 6 GB, so admission control could be enabled and set to tolerate one host failure.

Even within every DRS Host Group there is enough memory to run all VMs that must run on these hosts, even if one of the hosts fails.
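To double-check these capacity claims, here is a small Python sketch. It assumes three DRS Host Groups of three hosts each (Hosts #1 to #3 form one group, as in the maintenance example below) and that every host runs four license-bound VMs plus one unrestricted VM, which matches the 5 GB used per host. The group names are hypothetical, and the model ignores memory overhead and HA slot sizes, so it is only a simplified view of admission control.

```python
HOST_CAPACITY_GB = 6
VM_SIZE_GB = 1
LICENSED_PER_HOST = 4      # assumption: derived from the 5 GB used per host
UNRESTRICTED_PER_HOST = 1  # assumption: one VM without DRS affinity per host
# Hypothetical split of the 9 hosts into three DRS Host Groups.
HOST_GROUPS = {"group-1": [1, 2, 3], "group-2": [4, 5, 6], "group-3": [7, 8, 9]}
ALL_HOSTS = [h for hosts in HOST_GROUPS.values() for h in hosts]

USED_PER_HOST_GB = (LICENSED_PER_HOST + UNRESTRICTED_PER_HOST) * VM_SIZE_GB


def cluster_free_gb():
    """Free memory summed over all hosts in the cluster."""
    return len(ALL_HOSTS) * (HOST_CAPACITY_GB - USED_PER_HOST_GB)


def cluster_tolerates_one_host_failure():
    """The failed host's VMs must fit into the free memory of the surviving hosts."""
    surviving_free = (len(ALL_HOSTS) - 1) * (HOST_CAPACITY_GB - USED_PER_HOST_GB)
    return USED_PER_HOST_GB <= surviving_free


def group_tolerates_one_host_failure(hosts):
    """Only the license-bound VMs of the group are counted, as in the text."""
    licensed_demand = len(hosts) * LICENSED_PER_HOST * VM_SIZE_GB
    capacity_after_failure = (len(hosts) - 1) * HOST_CAPACITY_GB
    return licensed_demand <= capacity_after_failure


print("free memory in cluster:", cluster_free_gb(), "GB")
print("cluster survives 1 host failure:", cluster_tolerates_one_host_failure())
for name, hosts in HOST_GROUPS.items():
    print(name, "survives 1 host failure:", group_tolerates_one_host_failure(hosts))
```

Every check passes (9 GB free in the cluster, 12 GB of license-bound demand against 12 GB of remaining group capacity). Note that the group-level check only counts the license-bound VMs; the unrestricted VMs sitting on the same hosts are exactly what the maintenance scenario trips over.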

So everything seems ok. Are you sure?

From personal experience, I can tell you this causes a lot of trouble.

Assume you want to put host #1 into maintenance mode. This is what will happen:

The problem is the VMs without DRS affinity, which are evenly distributed across all hosts. When the host enters maintenance mode, DRS evacuates all VMs from it. The VM without license restrictions can be moved to any host in the cluster, but the license-restricted VMs cannot: the only hosts allowed for them are Hosts #2 and #3. DRS has to move 4 VMs with a total of 4 GB of memory to these hosts, but together they have only 2 GB of memory free.

Instead of first moving the VMs without license restrictions away from Hosts #2 and #3 to free up memory, DRS moves the VMs from Host #1 to Hosts #2 and #3, ignoring that memory will then be overcommitted. This immediately causes memory to be swapped out, and the VMs stop responding. It took a long time, sometimes 20 minutes, for DRS to vMotion the unrestricted VMs away from Hosts #2 and #3 and clean up this mess.
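The following toy model replays the evacuation of Host #1 in Python, under the same assumed layout (every host runs four license-bound VMs and one unrestricted VM, and Hosts #1 to #3 form one DRS Host Group). It is not DRS's actual placement algorithm: `make_room_first=False` merely reproduces the outcome described above, with the license-bound VMs piled onto Hosts #2 and #3, while `make_room_first=True` is a hypothetical ordering in which unrestricted VMs are first moved from Hosts #2 and #3 to hosts outside the group. All function names are made up for illustration.

```python
HOST_CAPACITY_GB = 6
VM_SIZE_GB = 1
ALL_HOSTS = list(range(1, 10))
GROUP = {1, 2, 3}  # assumption: the DRS Host Group that contains Host #1


def fresh_cluster():
    """Assumed layout: every host runs 4 license-bound VMs and 1 unrestricted VM."""
    return {h: {"licensed": 4, "unrestricted": 1} for h in ALL_HOSTS}


def used_gb(cluster, host):
    return (cluster[host]["licensed"] + cluster[host]["unrestricted"]) * VM_SIZE_GB


def free_gb(cluster, host):
    return HOST_CAPACITY_GB - used_gb(cluster, host)


def move(cluster, kind, src, dst, log):
    """Migrate one VM of the given kind and record the move."""
    cluster[src][kind] -= 1
    cluster[dst][kind] += 1
    log.append((kind, src, dst))


def evacuate(cluster, host, make_room_first):
    """Empty `host`; license-bound VMs may only land on the other hosts of GROUP."""
    log = []
    targets = sorted(GROUP - {host})
    outside = [h for h in ALL_HOSTS if h not in GROUP]
    if make_room_first:
        # Memory the license-bound VMs need beyond what the targets have free.
        shortfall = cluster[host]["licensed"] * VM_SIZE_GB - sum(
            free_gb(cluster, t) for t in targets
        )
        for t in targets:
            while shortfall > 0 and cluster[t]["unrestricted"] > 0:
                dst = max(outside, key=lambda h: free_gb(cluster, h))
                if free_gb(cluster, dst) < VM_SIZE_GB:
                    raise RuntimeError("no free memory outside the host group")
                move(cluster, "unrestricted", t, dst, log)
                shortfall -= VM_SIZE_GB
    # Place license-bound VMs on the least loaded target, even if that overcommits it.
    while cluster[host]["licensed"] > 0:
        dst = max(targets, key=lambda h: free_gb(cluster, h))
        move(cluster, "licensed", host, dst, log)
    # Unrestricted VMs may go to any other host.
    others = [h for h in ALL_HOSTS if h != host]
    while cluster[host]["unrestricted"] > 0:
        dst = max(others, key=lambda h: free_gb(cluster, h))
        move(cluster, "unrestricted", host, dst, log)
    return log


def overcommitted(cluster):
    """Map host -> GB of memory demanded beyond its capacity."""
    return {h: -free_gb(cluster, h) for h in ALL_HOSTS if free_gb(cluster, h) < 0}


for mode in (False, True):
    c = fresh_cluster()
    evacuate(c, 1, make_room_first=mode)
    print(f"make_room_first={mode}: overcommitted={overcommitted(c)}")
```

In the naive run, Hosts #2 and #3 each end up 1 GB overcommitted (`{2: 1, 3: 1}`). With the make-room-first ordering, two unrestricted VMs leave Hosts #2 and #3 before the license-bound VMs arrive, and no host is overcommitted.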

After DRS has finally finished rebalancing, the cluster looks like this:

VMs that don't respond for 20 minutes are, of course, not something you want in your infrastructure. So what can you do to make DRS behave differently?

I'm sorry to say that I have no answer for you.

My first thought was to limit concurrent vMotion tasks to give DRS enough time to move away the VMs that have no license restrictions. When I asked about this on Twitter, James Green pointed me to a blog article by Frank Denneman that describes how to limit concurrent vMotion tasks.

But after thinking about it, I don't believe this is a good idea. Reducing the vMotion capabilities is like driving a sports car with the handbrake on: under normal circumstances, the environment benefits from running more than one vMotion at a time. Additionally, there is no guarantee that this would give DRS the intelligence to move the unrestricted VMs away from Hosts #2 and #3 before memory is overcommitted.

Another hint from a colleague of mine was to temporarily disable the DRS rules while putting the host into maintenance mode. Once the VMs are evacuated, the DRS rules can be enabled again, because normal DRS balancing does not put as much pressure on vMotion as putting a host into maintenance mode does. This will hopefully give DRS enough time to recognize that it also has to move the VMs without license restrictions.

So far I have no solution for this, so comments are very welcome…